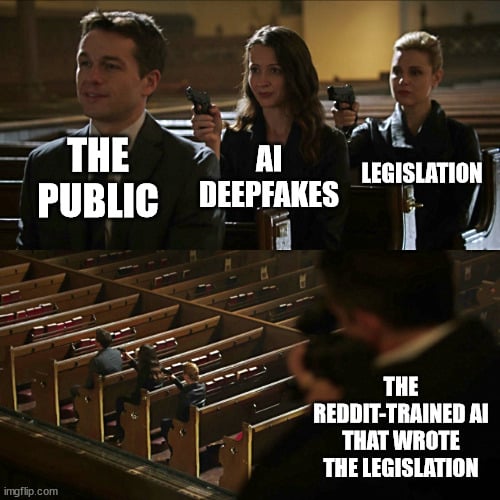

Should we trust a language model to write legal definitions, of a deepfake in this case? It seems the state rep was unable to proofread the model's output, since he was “really struggling with the technical aspects of how to define what a deepfake was.”

The problem is that the tools used to detect AI writing are not accurate. At the end of the day, as long as the information is worded correctly and is itself correct, that’s what matters. When you have AI write an argument citing cases that don’t exist as a defense lawyer… that’s when there are problems.

This chud uploaded potentially sensitive information to a public service. People really need education on how to intelligently use these services.

🙊 and the group think nonsense continues…

Y’all know those grammar-checking thingies? Yeah, same basic thing. You know when you’re stuck writing something and your wording isn’t quite what you’d like? Maybe you ask another person for ideas; same thing.

Is it smart to ask AI to write something outright? About as smart as asking a random person on the street to do the same. Is it smart to use proprietary AI that has ulterior political motives? Things might leak, like this, by proxy. Is it smart for people to ask others to proofread their work? Does it matter if that person is a grammar checker that suggests alternate wording and has most accessible human-written language at its disposal?

But the legislature did proofread it, so I’m not seeing the issue here?

That being said, his implication that having someone with expertise draft it is no better, or even worse, is very boneheaded. “AI” can’t replace subject matter experts (at least not yet?).

I don’t see any issue whatsoever in what he did. The model can draw meaning across all human language in a way humans are not even capable of. I could even go as far as building a training corpus from all the written works of the country’s founding members and generating a nearly perfect simulacrum that captures much of their personality and politics.

The AI is not really the issue here. The issue is how well the person uses the tool available. If they ask it for advice on precise wording, it shouldn’t matter so long as they proofread the output and it reflects their intent. If a politician’s significant other writes a sentence of a speech, does it matter? None of them write their own sophist campaign nonsense or their legislative works.

And yet again it cynically amuses me that AI has become “artificial” intelligence in the sense of “fake.”

It’s a shabby substitute for real intelligence, used by people who don’t possess any of their own to impress other people who don’t possess any of their own.

That’s one use, but not the only one.

This is actually true.

Most notably to me, the ability to sift through and collate enormous amounts of data has led to surprising things like diagnosing diabetes through retinal scans.

But those sorts of things, beneficial and impressive though they might be, remain at the fringe of AI research for the simple reason that those sorts of uses are too niche to provide the revenue stream that all of the bubble-building corporate parasites demand. Their focus is on the AI-as-a-substitute-for-real-intelligence aspect (and increasingly “AI” as just a meaningless marketing buzzword), since that’s where the money is. And unfortunately but not coincidentally, that’s where most of the public attention is too.

The Speznast.

State Senator adjusts bifocals

“What the hell is a poop knife?”

The stupid. It hurts.

Yeah, my sides hurt. 🤣

He argues that any shortcomings associated with using ChatGPT to write part of a law would also be present if humans take the reins. Kolodin said he didn’t see any pitfalls “that I don’t also see with relying on legislative attorneys to draft up legislation.”

Last I checked humans carried 100% of the liability.

I understand the irony. But can we not pretend he blindly used the output, or even generated a full page? It was one specific section providing a technical definition of “what is a deepfake.”

“I was really struggling with the technical aspects of how to define what a deepfake was. So I thought to myself, ‘Well, why not ask the subject matter expert (I do not agree with that wording, lol), ChatGPT?’” Kolodin said.

The legislator from Maricopa County said he “uploaded the draft of the bill that I was working on and said, you know, please, please put a subparagraph in with that definition, and it spit out a subparagraph of that definition.”

“There’s also a robust process in the Legislature,” Kolodin continued. “If ChatGPT had effed up some of the language or did something that would have been harmful, I would have spotted it, one of the 10 stakeholder groups that worked on or looked at this bill, the ACLU would have spotted, the broadcasters association would have spotted it, it would have got brought out in committee testimony.”

But Kolodin said that portion of the bill fared better than other parts that were written by humans. “In fact, the portion of the bill that ChatGPT wrote was probably one of the least amended portions,” he said.

I do not agree with his statement that any mistakes made by AI could also be made by humans. The reasoning, and the errors in reasoning, are quite different in my experience, but the way ChatGPT was used is absolutely fair.

No kidding. When I read that, my first thought was, “He’s clearly at least above the median intelligence of his fellow Arizona GOP reps, if not in the top 10% of their entire conference.”

Anyone who read the article AND has experience with the Arizona GOP probably thought the same thing.

The Arizona GOP collects some of the dumbest people alive.