I recently held a science slam about this topic! It’s a mix of the first computer scientists being mathematicians, who love their abbreviations, and limited screen size, memory and file size. It’s a trend in computing that was well justified in the past, but it has been making it harder for people to work together. And the need to use abbreviations has completely gone away in the age of autocompletion and language servers.

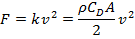

Man, I hate that so much. I swear this was half the reason I struggled with maths and physics, that these guys need to write this:

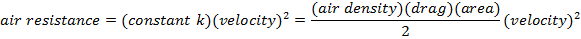

Rather than this:

At some point, they even collectively decided that not having to write a multiplication dot is more important than being able to use more than a single letter for your variables. Just what the fuck?

Yep, that’s what it usually boils down to. However, I think a slight shift in approach could be useful for basic materials, where introductory books / papers / … write out formulas. That makes it easier to understand the basic concepts before moving on to the more complex stuff. It should be easy to create such works, as they are usually created digitally, and autocomplete is available. Students can and will abbreviate those written-out words by themselves (after all, writing is annoying), but IMO reading comprehension is the key part that can be improved.

Also, when doing long formulas that you want to eliminate members of, writing stuff out can be a nightmare.

Bruh how large should our notebook pages be? Also how should we speak about the equation?

What if the terms should be represented in a matrix? What if mathematical functions like e^x, sin, ln etc. are present? Would you write "sine of e^(velocity of the particle B)"? Notations are necessary for readability.

I don’t know what to tell you. They obliterate readability for me.

I also genuinely believe these shorthands hinder access to research for the 99.9% of humanity who are not experts in the given field. Obviously, you do need to understand the context to use a formula correctly, but that also becomes harder when everything is written with hieroglyphs.

In university, I had to assess this paper. It took me 3 weeks to decipher that alien language, and it doesn’t even say anything particularly riveting.

To address your points:

I’m hoping that at least published math can be typed out with full names.

I’m not opposed to local aliases. E.g. if the point is that some values in the matrix are negative and others not, then absolutely write “with air_resistance as ‘a’, the catapultation matrix is { a, -a, -a, … }”.

I don’t actually want to introduce spaces into variable names, that’s just an example I randomly found online. I was rather thinking e.g. sine(euler^velocity_b).

Bonus point: You can reasonably type it on a computer, because you don’t need Greek letters and subscripts anymore.
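
To make the last few points concrete, here is a minimal sketch in Python (my choice of language, not something from the thread). It spells out `sine(euler^velocity_b)` with full names and shows a local alias for `air_resistance`. The function names are hypothetical, and only the first three matrix entries from the example above are reproduced, since the rest were elided.

```python
import math


def sine_of_exponential(velocity_b: float) -> float:
    """Compute sin(e^velocity_b) using spelled-out names instead of symbols."""
    euler = math.e  # Euler's number, named instead of written as "e"
    return math.sin(euler ** velocity_b)


def catapultation_row(air_resistance: float) -> list[float]:
    """Return the first three entries of the example matrix (hypothetical helper)."""
    a = air_resistance  # local alias: short name, defined right where it is used
    return [a, -a, -a]


print(sine_of_exponential(0.0))
print(catapultation_row(2.0))
```

The alias `a` only lives inside one small function, so the reader never has to look far for its definition.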

Btw, I am all for local aliases. I see them most of the time.

i.e., [equation], where a = area of the surface, v = velocity, …

But without shorthand it would be a pain to write and remember. Some shorthands, like the del operator, really, really simplify the original expression and showcase the physical meaning better, but they look alien to people who don’t know them. Still, we can’t stop using them, since that would make everything else more difficult for people in that area.

What OP is talking about is readability, so in a situation where you’re taking your own notes and have your own set of defined symbols, full words aren’t necessary.

I personally lost all interest in math because there are way too many opinionated or non-standard symbol definitions

That seems like a strange reason to quit math since most symbols are pretty well agreed upon, and maths has little to do with the actual notation either way.

I should’ve said “anything math-heavy,” but even then, it seems like switching fields or applications of math requires understanding a new definition of the same symbols, and a lot of that could be avoided with words.

I mean, if you get into any real depth with math, you are going to reach a point where you can’t conveniently use words to describe the symbols being manipulated.

As an example from the math I am doing literally right now, I very much prefer using C+R over “semicircular arc in the upper half of the complex plane with radius R”, or M+(f(z)), which means “maximum of the magnitude of the function f(z) over C+R”. If I were to write those out in full every time, it would just become a clusterfuck.
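
For readers who have not met this notation, a sketch of what the two shorthands might expand to. This assumes that “C+R” is the usual contour symbol with a subscript R and a superscript plus, and that “M+” is the maximum modulus over that contour; the exact typesetting is my guess, since the sub- and superscripts did not survive in the comment.

```latex
% Assumed reading of "C+R": the upper semicircular arc of radius R
C_R^{+} = \left\{\, R e^{i\theta} : 0 \le \theta \le \pi \,\right\}

% Assumed reading of "M+(f(z))": the largest value of |f| on that arc
M^{+}(f) = \max_{z \in C_R^{+}} \lvert f(z) \rvert
```

Each symbol stands in for a full clause, which is the trade-off being argued about here.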

Also, you still wouldn’t be able to get rid of symbols, because some symbols are placeholders and straight up don’t have any meaning in natural language. This occurs often in physics as well, not just in pure maths. For example, the Laplace transform of any function is written as a function of the variable “s”, but “s” doesn’t have a clear meaning (at least as far as I know).
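
For reference, the standard one-sided definition, written out as a sketch. Here f is the original function of time t, and the names σ and ω are the conventional labels for the real and imaginary parts of s. In engineering texts s is usually called the complex frequency, though that name hardly makes it more intuitive.

```latex
F(s) = \mathcal{L}\{f\}(s) = \int_{0}^{\infty} f(t)\, e^{-st}\, \mathrm{d}t,
\qquad s = \sigma + i\omega
```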

It’s been really holding me back in learning to code. I felt pretty comfortable at first learning JavaScript, but as I got further, the code became increasingly hard to look back at and understand, to the point where I had to spend a lot of time understanding my own code.

Does it truly matter after the code has been compiled if it has more full words or not?

It matters as soon as a requirement change comes in and you have to change something. Writing a dirty-ass, incomprehensible but working piece of code is ok, as long as no one touches it again.

But as soon as code has to be reworked, worked on together by multiple people, or you just want to understand what you did 2 weeks earlier, code readability becomes important.
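
A small illustration of that point, as a sketch in Python (the compound-interest example and all names in it are made up for this comment). Both functions do exactly the same arithmetic and run the same way; only the second one tells you what it is for when you come back to it two weeks later.

```python
# Abbreviated version: works, but the intent has to be reverse-engineered.
def calc(p, r, n):
    return p * (1 + r) ** n


# Spelled-out version: identical arithmetic, but the names carry the meaning.
def compound_balance(principal: float, yearly_rate: float, years: int) -> float:
    return principal * (1 + yearly_rate) ** years


# Both calls compute the same value.
print(calc(100.0, 0.1, 2))
print(compound_balance(principal=100.0, yearly_rate=0.1, years=2))
```

With autocompletion, the longer names cost almost nothing to type, which is the argument made at the top of the thread.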

I like Uncle Bob’s Clean Code (with a grain of salt) for a general idea of what an approach to making code readable could look like. However, it is controversial and, if overdone, can achieve the opposite. I like it as a starting point, though.

Try writing a fully spelled-out formula like that with pen and ink, and then tell me if you can read it back yourself.

Single letters are not a good system, but they were the least bad one.

I don’t have to write with pen and paper anymore, though.

/s?

Nope.

I would have quit math if I had to do algebra with names instead of letters.
