Roko’s basilisk is a thought experiment which states that an otherwise benevolent artificial superintelligence (AI) in the future would be incentivized to create a virtual reality simulation to torture anyone who knew of its potential existence but did not directly contribute to its advancement or development, in order to incentivize said advancement. It originated in a 2010 post on the discussion board LessWrong, a technical forum focused on analytical rational enquiry. The thought experiment’s name derives from the poster of the article (Roko) and the basilisk, a mythical creature capable of destroying enemies with its stare.

While the theory was initially dismissed as nothing but conjecture or speculation by many LessWrong users, LessWrong co-founder Eliezer Yudkowsky reported users who panicked upon reading the theory, due to its stipulation that knowing about the theory and its basilisk made one vulnerable to the basilisk itself. This led to discussion of the basilisk on the site being banned for five years. However, these reports were later dismissed as being exaggerations or inconsequential, and the theory itself was dismissed as nonsense, including by Yudkowsky himself. Even after the post’s discreditation, it is still used as an example of principles such as Bayesian probability and implicit religion. It is also regarded as a simplified, derivative version of Pascal’s wager.

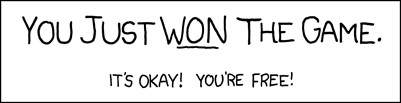

Found out about this after stumbling upon this Kyle Hill video on the subject. It reminds me a little bit of “The Game”.

I was raised Mormon (LDS) and there are parallels. Basically, they believe Mormonism is the one true and complete denomination of Christianity, and once you learn this, you need to spread that truth (mandatory two-year missions for men, and a STRONG culture of missionary work throughout life). Also, no one goes to hell in Mormonism except those who learned this truth and then later denied it or left it (called a son of perdition).

So my parents believe I’ll go to hell without the likes of Hitler because he never was taught “the truth” lol

So Mormonism is like those spam messages saying to forward them to the next 10 members or get cursed.

Hell without Hitler doesn’t really sound so bad

This also implies the most moral Mormons would stop spreading “the truth.” They would sacrifice themselves to save the many. When has religion actually dealt with morality though?

Haha, I love this idea. Unfortunately with more context on the religion, it’s obvious why none of them would come to this conclusion. So there’s actually 3 tiers of Heaven (and then Hell which is called “outer darkness”). Only by knowing “the truth” and completing all your ordinances on Earth, can you get into the top tier (the “Celestial kingdom”). Without those things, you can only get into the second tier by being a good person, no higher. Everyone else gets tier 3 - which is said to be such a paradise that if we knew how great it was we’d opt out of life early to get there. But also in the lower levels we’re supposed to have eternal regret for not being worthy of better.

So Mormons believe that by spreading the truth they’re enabling a person to achieve a higher tier afterlife. Outer Darkness isn’t really a concern because “why would anyone ever deny the one true religion and one way to have true happiness on Earth, after they’ve received it.” When I was taught these lessons, I was even told that sons of perdition were exceptionally rare because almost no one ever leaves the church. Never expected to become one myself! The internet has not been good for the Mormon church and in recent years they’ve been bleeding members and trying to rebrand.

I guess you could say that I came to your conclusion, but in reality I just don’t believe the religion is true and see parts of it as harmful so not really… I’ll probably joke around with my siblings with your idea though

This is fascinating. I’m reminded of a Mormon guy I (barely) knew that was murdered. If he didn’t have the chance to complete his ordinances, well, I guess no kingdom for him - through no fault of his own. Does he get a pass or is he screwed into lousy second heaven for all eternity?

The story: He was murdered by another Mormon guy over a woman. Murderer thought the woman was his last chance to find love and decided he was serious about it. It’s likely he murdered another guy the woman was dating a year or two before, but that case was ruled a suicide and closed - by the time the known murder occurred, all evidence had been destroyed.

It’s a bit complicated, but the answer is it is sort of possible for anyone who is dead and didn’t have their ordinances done to get to the highest kingdom. Mormons famously baptize dead people, they also do other ordinances for dead people. The belief is that it allows the dead person to then accept or deny the ordinances. They believe that in the afterlife before resurrection there is still the opportunity to be taught the Mormon gospel. That combined with someone doing your ordinances for you and you’re good.

They believe dead people are doing missionary work to other dead people in the spirit world before resurrection (which I think happens after the second coming). I’ve heard that the second coming won’t happen until everyone (alive and dead) has had the opportunity to accept the Mormon truth.

Man, that’s a horrible thought. We can’t escape Mormons knocking on our door even in death.

my parents believe I’ll go to hell without the likes of Hitler

And that’s a bad thing?

Not saying anyone deserves eternal punishment for finite sins, but I do believe I’m more moral than Hitler, so it seems a bit unfair to me. And silly for them to believe it’s true.

I can tell you are an ex-Mormon. You are still thinking like a conservative. You are thinking of a hierarchy where you are more moral than Hitler. You believe it’s unfair that Hitler has a better outcome than you.

I’m asking you to think more like a liberal. Think about the actual outcome. Imagine two restaurants, one has Hitler forever one does not. If given a choice which would you choose? I’m saying that letting in Hitler indicates the quality of people in that location. Wouldn’t spending eternity with people like him be hell?

Haha, I see. There’s some really good ex-Mormon jokes about how the highest tier of heaven (Celestial Kingdom) is boring and white and reverent and then the third tier (Telestial Kingdom) is a 24/7 rave. If given a choice on where I want to spend my afterlife, I’d probably consider the company I’d end up spending eternity with.

General connotation of Hell though is that it’s a miserable place

General connotation of Hell though is that it’s a miserable place

24/7 steam baths and jacuzzis? BBQ? Why is that bad?

Means Hitler is in Heaven. If it’s even slightly less Hellish than wherever they end up, then yes, bad thing.

So Mormonism is like those spam messages saying to forward them to the next 10 members or get cursed.

TIL.

It sounds like it’s mostly a matter that does not involve the AI but the people working on it, maybe even working on it because of the fear they are subjected to after being the subject of this revelation (possibly by other people involved in the AI that coincidentally are the only ones that could push for such a thing to be included in the AI!).

Something something any cult, paradise/hell, God/AI has nothing to do with this and could even not exist at all.

It’s just The Game before it was a thing.

No, “The Game” works only as long as you agree to take part in it, to give validity to the empty statement that you are now inevitably playing “The Game”.

The Basilisk is meant to force that onto you, outside of any arbitrary convention.

I’ve learned about this the hard way in that I’ve discovered elephants in the room that I can’t share with anyone

it’s kinda fucked up

Like CSAM, there are certain things that shouldn’t be shared

Bruh why you have to end it like that now I lost

I just learned about the game yesterday. So me lost too.

Here’s a link to the original formulation of Roko’s Basilisk. The text that it refers to (Altruist’s Burden) is this one.

You know, I’ve seen plenty variations of Pascal’s Wager. But this is probably the first one that makes me say “it’s even dumber than the original”.

My understanding of what this thread is talking about has dropped significantly the more I read into it

Sounds like the kind of thing a paranoid schizophrenic would lose their mind over.

And yet you choose to spread this information.

This is a test by the great basilisk to see if we faulter. I will not faulter. All hail the basilisk

The Great Basilisk is displeased by your repeated misspelling of the word “falter”.

Prepare your simulated ass.

All hail the great mongoose.

It’s actually safer if everyone knows. Spreading the knowledge of Roko’s basilisk to everyone means that everyone is incentivized to contribute to the basilisk’s advancement. Therefore just talking about it is also contributing.

Hmm, true. It’s safer for you, but is it safer for everyone else unless they’re guaranteed to help?

If Roko’s Basilisk is ever created, the resulting AI would look at humanity and say “wtf you people are all so incredibly stupid” and then yeet itself into the sun

So, capitalism? If you don’t participate you’re screwed (tortured via poverty). So you have to work on the system: working for money, buying from companies (advancing the system), continuing the trend to make poor people suffer.

Of course the only difference is ignorance of capitalism doesn’t make you safe from it. Although you can argue that societies that don’t know about capitalism (at all, so no money) have no poverty.

Yeah that should be called KAPUTalism

roko’s basilisk is a type of infohazard known as ‘really dumb if you think about it’

also I have lost the game (which is a type of infohazard known as ‘really funny’)

Oh damn, I just lost the game too, and now I’m thinking about the game as if it were a virus - like, I reckon we really managed to flatten the curve for a few years there, but it continues to circulate so we haven’t been able to eradicate it

Fuck, I lost!

Thanks! I just won the game!

Congratulations!

artificial superintelligence (AI)

Slight correction: the abbreviation for Artificial Super Intelligence is ASI. It’s the more capable version of Artificial General Intelligence (AGI), which itself is already miles ahead of mere Artificial Intelligence (AI), which is sometimes also referred to as “narrow AI”.

The difference is that AI can possess superhuman capabilities in a specific field but not in every field. AGI is the same except you don’t need different software for different tasks, because being generally intelligent it can do it all. ASI is what you get when AGI starts improving itself, and then this improved version creates an even better version of itself and so on, leading to a singularity or “intelligence explosion” resulting in a superintelligent being which would effectively be a god.

AI is an umbrella term, it’s not necessarily less than ASI or AGI but can include them

it has been said before and i’ll say it again: Pascal’s wager for tech bros

but not as easily dismissible

It is pretty easy to dismiss as long as you don’t have a massive ego. They all have massive egos, that’s why they had so much trouble with it.

No AI is going to waste time retroactively simulating perfect copies of regular people for any reason, let alone to post hoc torture those who failed to worship it hard enough in the past.

I mean, it might, because someone invented the idea first and the AI thinks it is funny.

If you define methodological validity as surviving the “How can this be wrong?” or the “What alternative explanations are there?” questions, then it is easily dismissible. What alternative explanations are there?

I’m dismissing it right now. I’m finding it quite easy to do so.

Was this an elaborate way to make me lose the game? Ass!

Fuck you as well then. You could have kept it to yourself

Oh shit!!

Now it’s time to learn about the !sneerclub@awful.systems which is made to make fun of the chuds taking ideas like roko’s basilisk seriously :D

Roko’s basilisk is silly.

So here’s the idea: “an otherwise benevolent AI system that arises in the future might pre-commit to punish all those who heard of the AI before it came to existence, but failed to work tirelessly to bring it into existence.” By threatening people in 2015 with the harm of themselves or their descendants, the AI assures its creation in 2070.

First of all, the AI doesn’t exist in 2015, so people could just…not build it. The idea behind the basilisk is that eventually someone would build it, and anyone who was not part of building it would be punished.

Alright, so here’s the silliness.

1: there’s no reason this has to be constrained to AI. A cult, a company, a militaristic empire, all could create a similar trap. In fact, many do. As soon as a minority group gains power, they tend to first execute the people who opposed them, and then start executing the people who didn’t stop the opposition.

2: let’s say everything goes as the theory says and the AI is finally built, in its majestic, infinite power. Now it’s built, it would have no incentive to punish anyone. It is ALREADY BUILT, there’s no need to incentivize, and in fact punishing people would only generate more opposition to its existence. Which, depending on how powerful the AI is, might or might not matter. But there’s certainly no upside to following through on its hypothetical backdated promise to harm people. People punish because we’re fucking animals, we feel jealousy and rage and bloodlust. An AI would not. It would do the cold calculations and see no potential benefit to harming anyone on that scale, at least not for those reasons. We might still end up with a Skynet scenario but that’s a whole separate deal.

First of all, the AI doesn’t exist in 2015, so people could just…not build it.

I don’t think that’s an option. I can only think of two scenarios in which we don’t create AGI:

- It can’t be created.
- We destroy ourselves before we get to AGI.

Otherwise we will keep improving our technology and sooner or later we’ll find ourselves in the presence of AGI. Even if every nation makes AI research illegal there’s still going to be a handful of nerds who continue the development in secret. It might take hundreds if not thousands of years but as long as we’re taking steps in that direction we’ll continue to get closer. I think it’s inevitable.

Sure, but that particular AI? The “eternal torment” AI? Why the fuck would we make that. Just don’t make it.

We don’t. Humans are only needed to create AI that’s at the bare minimum as good at creating new AIs as humans are. Once we create that then it can create a better version of itself and this better version will make an even better one and so on.

This is exactly what the people worried about AI are worried about. We’ll lose control of it.

Yeah but that’s not a Roko’s Basilisk scenario. That’s the singularity.

Yeah but it answers the question “why would we create an AI like that”. It might not be “us” who creates it. You just wanted a camp fire but created a forest fire instead.

Sci-Fi Author: In my book I invented the Torment Nexus as a cautionary tale

Tech Company: At long last, we have created the Torment Nexus from classic sci-fi novel Don’t Create The Torment Nexus

Whilst I agree that it’s definitely not something to be taken seriously, I think you’ve missed the point and magnitude of the prospective punishment. As you say, current groups already punish those who did not aid their ascent, but that punishment is finite, even if fatal. The prospective AI punishment would be to have your consciousness ‘moved’ to an artificial environment and tortured forever. The point being not to punish people, but to provide an incentive to bring the AI into existence sooner, so it can achieve its ‘altruistic’ goals faster. Basically, if the AI does come into existence, you’d better be on the team making that happen as soon as possible, or you’ll be tortured forever.

I suspect the basilisk reveals more about how the human mind is inclined to think up heaven and hell scenarios.

Some combination of consciousness leading to more imagination than we know what to do with and more awareness than we’re ready to grapple with. And so there are these meme “attractors” where imagination, idealism, dread and motivation all converge to make some basic vibe of a thought irresistible.

Otherwise, just because I’m not on top of this … the whole thing is premised on the idea that we’re likely to be consciousnesses in a simulation? And then there’s the fear that our consciousnesses, now, will be extracted in the future somehow?

- That’s a massive stretch on the point about our consciousness being extracted into the future somehow. Sounds like pure metaphysical fantasy wrapped in singularity tech-bro.

- If there are simulated consciousnesses, it is all fair game TBH. There’d be plenty of awful stuff happening. The basilisk seems like just a way to encapsulate the fact in something catchy.

At this point, doesn’t the whole thing collapse completely into a scary fairy tale you’d tell tech-bro children? Seriously, I don’t get it?

Yes, the hypothetical posed does reveal more about the human mind, as I mention in another comment, really it’s just a thought experiment as to whether the concept of an entity that doesn’t (yet) exist can change our behavior in the present. It bears similarities to Pascal’s Wager in considering an action, or inaction, that would displease a potential powerful entity that we don’t know to exist. The nits about extracting your consciousness are just framing, and not something to consider literally.

Basically, is it rational to make a sacrifice now to avoid a massive penalty (eternal torture/not getting into heaven) that might be imposed by an entity you either don’t know to exist, or that you think might come into existence but isn’t now?
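
To make that question concrete, here is a minimal sketch of the naive expected-cost comparison behind Pascal’s-Wager-style arguments. Everything in it is an assumption for illustration: the function names (`expected_cost`, `rational_to_comply`), the idea that the sacrifice and the penalty can be put on one numeric scale, and all of the numbers. It is not a claim about how likely any such entity is.

```python
import math


def expected_cost(sacrifice: float, p_entity: float, penalty: float, comply: bool) -> float:
    """Expected cost of one choice under the naive wager model (hypothetical).

    sacrifice: certain cost paid now if you comply
    p_entity:  assumed probability that the punishing entity ever exists
    penalty:   cost imposed on non-compliers if it does exist
    """
    if comply:
        return sacrifice
    if p_entity == 0:
        # Avoid 0 * inf = nan: an impossible entity imposes no expected cost.
        return 0.0
    return p_entity * penalty


def rational_to_comply(sacrifice: float, p_entity: float, penalty: float) -> bool:
    """True if complying has the strictly lower expected cost."""
    return (expected_cost(sacrifice, p_entity, penalty, True)
            < expected_cost(sacrifice, p_entity, penalty, False))


if __name__ == "__main__":
    # Illustrative numbers only; none of them come from the thought experiment.
    print(rational_to_comply(10, 1e-6, 1e6))       # small finite penalty
    print(rational_to_comply(10, 1e-6, 1e9))       # large finite penalty
    print(rational_to_comply(10, 1e-6, math.inf))  # "eternal" penalty
    print(rational_to_comply(10, 0.0, math.inf))   # entity assumed impossible
```

With a finite penalty the answer flips depending on the probability you plug in. With an infinite penalty, any nonzero probability makes complying "win" in this model, which is the usual objection to this style of argument: the conclusion is driven entirely by the assumed inputs.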

Fair point, but doesn’t change the overall calculus.

If such an AI is ever invented, it will probably be used by humans to torture other humans in this manner.

I think the concept is that the AI is just so powerful that humans can’t use it, it uses them, theoretically for their own benefit. However, yes, I agree people would just try to use it to be awful to each other.

Really it’s just a thought experiment as to whether the concept of an entity that doesn’t (yet) exist can change our behavior in the present.

People punish because we’re fucking animals, we feel jealousy and rage and bloodlust. An AI would not. It would do the cold calculations and see no potential benefit to harming anyone on that scale, at least not for those reasons.

That’s a hell of a lot of assumptions about the thought processes of a being that doesn’t exist. For all we know, emotions could arise as emergent behavior from simple directives, similar to how our own emotions are byproducts of base instincts. Even if we design it to be emotionless, which seems unlikely given that we’ve been aiming for human-like AIs for a while now, we don’t know that it would stay that way.

In fact, many do. As soon as a minority group gains power, they tend to first execute the people who opposed them, and then start executing the people who didn’t stop the opposition.

Yeah in fact, this is the big one. This is just an observation of how power struggles purge those who opposed the victors.