Hot take: Good for them.

This will have zero impact on 99% of independent developers. Most small companies can move to an alternative or roll their own infrastructure. This will only really impact large corporations. I’m all for corporation-on-corporation violence. Let them fight.

This is a different take on the VMscare broadcom purchase.

The real losers here are SoHos where it is too pricy to migrate and also too pricy not to. I don’t know whether that’s in your 1% or 99% but:

- devs don’t develop for infrastructure their customers don’t use. It’s as dead as LKC, then.

- big customers have deprecated their VMware infra and are only spending on replacement products, and if they do the same for docker the company will suffer in a year.

If docker doesn’t have the gov/mil revenue, are we prepared for the company shedding projects and people as it shrinks?

Remember: when tech elephants fight, it’s we the grass who suffer.

Enshittification is a very, very real thing. GitLab did something similar when it raised pricing by 5x a few years back.

you didn’t need anything like docker with web 1.0; you just needed cuteftp and a text editor.

Surely you mean WS_FTP LE.

Me, still using winscp for random nonsense.

Their entire offering is such a joke. I’m forced to use Docker Desktop for work, as we’re on Windows. Every time that piece of shit gets updated, it’s more useless garbage. Endless security snake oil features. Their installer even messes with your WSL home directory. They literally fuck with your AWS and Azure credentials to make it more “convenient” for you to use their cloud integrations. When they implemented that, they just deleted my AWS profile from my home directory, because they felt it should instead be a symlink to my Windows home directory. These people are not to be trusted with elevated privileges on your system. They actively abuse the privilege.

The only reason they exist is that they are holding the majority of images hostage on their registry. Their customers are similarly being held hostage, because they started to use Docker on Windows desktops and are now locked in. Nobody gives a shit about any of their benefits. Free technology and hosting was their setup, now they let everyone bleed who got caught. Prices will rise until they find their sweet spot. Thanks for the tech. Now die already.

I switched to running docker inside wsl2 (installed as per their docs) and so far it’s been working well.

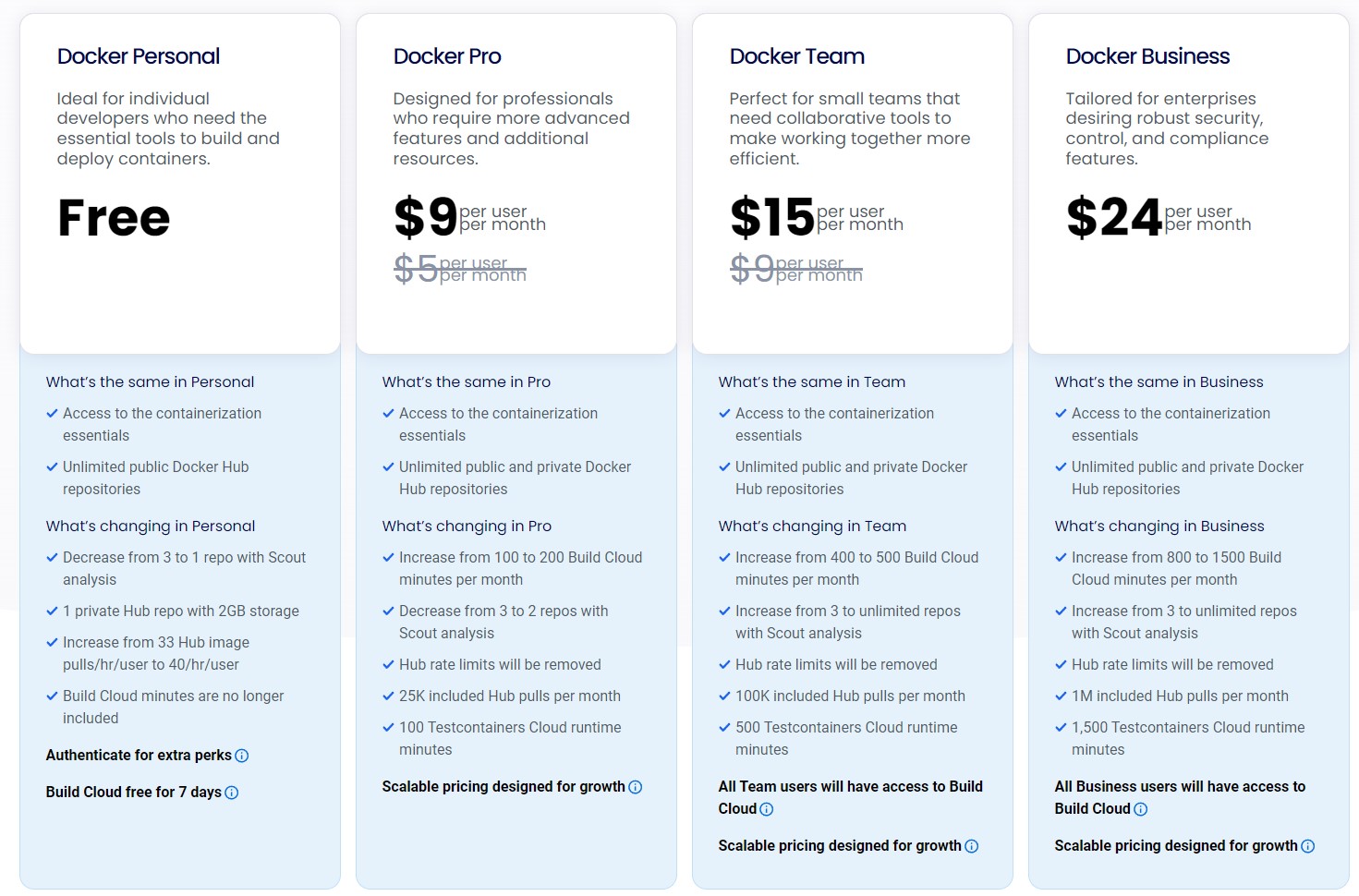

I actually thought this headline was a joke (i.e. adding 80% of 0 to 0 equals 0), until I clicked the link to see that people actually pay for Docker? I guess this is for Enterprise?

I have never really had much use for it, so I have never installed it, but it seems like everyone here uses Docker, which is surprising given the cost and what you just said.

This speaks to my soul so much. I started at a non profit 2 years ago and it pains me how much the company spends on Oracle and docker now and no one does anything about it. So much of our infrastructure is built to rely on these things that we can’t just do without them when they do crazy shit like this. And Oracle and docker can afford to do this as long as a few cash cows hang on like us. Hostage is the worst and best description.

Dev containers have been dead for a long time.

Docker is not only about dependency management. It also offers service “composing”, via `docker compose`, and network isolation for each service.

Although I personally love Nix, and I run NixOS on some of my servers, I do not believe it can replace Docker/Podman. Unless you go the NixOS Containers route.

Interfaces, VLANs and a capable gateway. Except instead of the vendor lock-in you have access to the gold standards of which all out scale

Maybe to the two dozen nix users. To all others dev containers are very well alive.

Lol. It’s the largest and most up-to-date repo of any, but by all means, “dozen” hahaha

Are there any decent alternatives to docker hub for pushing images if I’m just a hobbyist?

Folks, the docker runtime is open source, and not even the only one of its kind. They won’t charge for that. If they tried to make it closed source, everyone would just laugh and switch to one of several completely free alternatives. They charge for hosting images, build time on their build servers, and various “premium” developer tools you don’t need. In fact, you need none of this, you can do all of it yourself on whatever hardware you deem to be good enough. There are also many other hosted alternatives out there.

Docker thinks they have a monopoly, for some reason. If you use the technology, you are probably already aware that they don’t.

Does that include running Windows containers? It seems like the alternatives don’t support those.

The Windows container runtime is free as well. Simply install the Docker runtime from Chocolatey or winget, along with the Windows Containers and Hyper-V Windows features. This is what we do on some build machines for CI.

There’s no reason to use Desktop other than “ease of use”.

There are some reasons. Networking can get messed up, so Docker Desktop “fixed that” for you, but the dirty secret is it’s basically a Linux VM with Docker CE and some convenience network routes.

You’re talking about Linux containers on Windows. I think the commenter above was referring to Windows containers on Windows, which is its own special hell for lucky folks like me.

Otherwise I totally agree. I’ve done both setups without Docker Desktop.

Are you guys really pulling more than 40 images per hour? Isn’t the free one enough?

A single malfunctioning service that restarts in a loop can exhaust the limit near instantly. And now you can’t bring up any of your services, because you’re blocked.
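For a rough sense of how fast that happens, here is a back-of-the-envelope sketch in Python. It assumes the 40-pulls-per-hour figure from the question above, that every restart triggers exactly one pull that counts against the limit, and that the service restarts at a fixed interval with no backoff. The function name is made up for illustration.

```python
def seconds_until_blocked(pull_limit: int, restart_interval_s: float) -> float:
    """Estimate how long a crash-looping service takes to use up a pull budget.

    Assumption: each restart causes exactly one pull that counts against the limit.
    """
    if pull_limit <= 0 or restart_interval_s <= 0:
        raise ValueError("pull_limit and restart_interval_s must be positive")
    return pull_limit * restart_interval_s


# A service that crashes and force-pulls every 10 seconds, against 40 pulls per hour.
print(seconds_until_blocked(40, 10) / 60)  # minutes until the budget is gone
```

With those numbers the budget is gone in under seven minutes. If several services on the same IP loop at once, it goes proportionally faster.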

I’ve been there plenty of times. If you have to rely on docker.io, you better pay up. Running your own NexusRM or Harbor to proxy it can drastically improve your situation though.
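If you go the proxy route, the main change on the client side is that image references point at your proxy instead of docker.io. Here is a minimal Python sketch of that rewrite, assuming a Harbor-style proxy-cache project where Docker Hub images are addressed as `<host>/<project>/<namespace>/<name>`. The host `harbor.example.internal`, the project name `dockerhub-proxy` and the function name are hypothetical, and a Nexus setup may use a different path layout.

```python
DOCKER_HUB_HOSTS = ("docker.io", "index.docker.io")


def via_proxy(image: str, proxy_prefix: str) -> str:
    """Rewrite a Docker Hub image reference to go through a pull-through proxy.

    References that name another registry (ghcr.io, quay.io, ...) are returned unchanged.
    """
    first, sep, rest = image.partition("/")
    # The first path component is a registry host if it looks like a hostname.
    names_registry = bool(sep) and ("." in first or ":" in first or first == "localhost")
    if names_registry:
        if first not in DOCKER_HUB_HOSTS:
            return image
        path = rest
    else:
        path = image
    # Official images such as "nginx" live under the implicit "library" namespace.
    if "/" not in path:
        path = "library/" + path
    return f"{proxy_prefix}/{path}"


proxy = "harbor.example.internal/dockerhub-proxy"  # hypothetical proxy project
for ref in ("nginx:latest", "pihole/pihole:latest", "ghcr.io/foo/bar:1"):
    print(ref, "->", via_proxy(ref, proxy))
```

The cache then only has to reach docker.io once per image, no matter how many hosts or restarts ask for it.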

Docker is a pile of shit. Steer clear entirely of any of their offerings if possible.

I use docker at home and at work, nexus at work too. I really don’t understand… even a malfunctioning service should not pull the image over and over, there should be a cache… It could be some fringe case, but I have never experienced it.

Ultimately, it doesn’t matter what caused you to be blocked from Docker Hub due to rate-limiting. When you’re in that scenario, it’s most cost efficient to buy your way out.

If you can’t even imagine what would lead up to such a situation, congratulations, because it really sucks.

Yes, there should be a cache. But sometimes people force pull images on service start, to ensure they get the latest “latest” tag. Every tag floats, not just “latest”. Lots of people don’t pin digests in their OCI references. This almost implies wanting to refresh cached tags regularly. Especially when you start critical services, you might pull their tag in case it drifted.
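To see which of your references float, you can check whether they carry a digest. A minimal Python sketch, assuming sha256 digests only; the function name and the example references are made up for illustration.

```python
import re

# A digest-pinned OCI reference ends in "@sha256:" followed by 64 hex characters.
_DIGEST_SUFFIX = re.compile(r"@sha256:[0-9a-f]{64}$")


def is_digest_pinned(reference: str) -> bool:
    """Return True if the image reference is pinned to a sha256 digest."""
    return _DIGEST_SUFFIX.search(reference) is not None


fake_digest = "0" * 64  # placeholder, not a real image digest
for ref in ("pihole/pihole:latest", f"pihole/pihole@sha256:{fake_digest}"):
    print(ref, "pinned" if is_digest_pinned(ref) else "floating")
```

A pinned reference never needs a refresh pull, because the content behind a digest cannot change.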

Consider you have multiple hosts in your home lab, all running a good couple services, you roll out that new container runtime upgrade to your network, it resets all caches and restarts all services. Some pulls fail. Some of them are for DNS and other critical services. Suddenly your entire network is down, and you can’t even get on the Internet, because your pihole doesn’t start. You can’t recover, because you’re rate-limited.

I’ve been there a couple of times until I worked on better resilience, but relying on docker.io is still a problem in general. I did pay them for quite some time.

This is only one scenario where their service bit me. As a developer, it gets even more unpleasant, and I’m not talking commercial.

On Lemmy, it’s a sin to make money off your work, especially if it is opensource core projects providing paid infrastructure/support. You can only ask for donations and/or quit. No in-between.

We just build from scratch and pull nothing

Podman or Rancher Desktop

Oh. This is /technology. I thought pants were about to go way up in price for a second.

Podman guys… Podman All the way…

I don’t think you even need Docker licenses to run Linux containers, but unfortunately I need to deal with this because I have some legacy software running in Windows containers.

That’s not the point. Maybe you can, but for how long? You will never stop asking that question with Docker…

I’m sure “professionals” can afford $4

Oh shit, what would I do without… Scout Analysis?

Recover several hundred GB of disk space, if my team’s experience was any indication.

Anyone looking for a free drop in replacement, I’ve been using Rancher Desktop without any issues https://rancherdesktop.io/

So does this set up something like a one-node Kubernetes cluster on your local machine? I didn’t know that was possible.

Basically yes. Rancher Desktop sets up K3s in a VM and gives you `kubectl`, `docker` and a few other binaries preconfigured to talk to that VM. K3s is just a lightweight all-in-one Kubernetes distro that’s relatively easy to set up (of course, you still have to learn Kubernetes so it’s not really easy, just skips the cluster setup).

Thanks for the info. For others curious, here’s a decent short intro to K3s.

Now I’m kind of wondering if this is light enough for integration tests.

For integration tests I’d go with kind instead. I use it at work and it works perfectly in our CI/CD. https://kind.sigs.k8s.io/

I use this as well. I haven’t had any issues.

the enshittification begins…

Begins?!? Docker Inc was waist deep in enshittification the moment they started rate limiting docker hub, which was nearly 3 or 4 years ago.

This is just another step towards the deep end. Companies that could easily move away from docker hub, did so years ago. The companies that remain struggle to leave and will continue to pay.

When that happened, our DevOps teams migrated all our prod k8s clusters to Podman, with zero issues. Docker who?

Your choice of container runtime has zero impact on the rate-limits of Docker Hub. They probably had a container image proxy already and just switched because Docker is a security nightmare and needlessly heavy.

Why would anybody use Podman for k8s… containerd has been the default for years.

Maybe you can run containerd with podman… I haven’t checked. I just run k3s myself.

Yeah, but you don’t need anything besides the runtime with kubernetes. Podman is completely unnecessary since kubelet does the container orchestration based on Kubernetes control plane. Running podman is like running docker, unnecessary attack surface for an API that is not used by anybody (in Kubernetes).

I run k0s at home, FWIW, tried k3s too :)

Yeah I know.

Interesting that you run k0s, hadn’t heard about it. Would you mind giving a quick review and compare it to k3s, pros and cons?

I can’t really make an exhaustive comparison. I think k3s was a little too opinionated for my taste, with lots of rancher logic in it (paths, ingress, etc.). K0s was a little more “bare”, and I had some trouble in the past with k3s with upgrading (encountered some error), while with k0s so far (about 2 years) I never had issues. k0s also has some ansible role that eases operations, I don’t know if now also k3s does. Either way, they are quite similar overall so if one is working for you, rest assured you are not missing out.