A purported leak of 2,500 pages of internal documentation from Google sheds light on how Search, the most powerful arbiter of the internet, operates.

The leaked documents touch on topics like what kind of data Google collects and uses, which sites Google elevates for sensitive topics like elections, how Google handles small websites, and more. Some information in the documents appears to be in conflict with public statements by Google representatives, according to Fishkin and King.

This is the best summary I could come up with:

But how exactly Google ranks websites has long been a mystery, pieced together by journalists, researchers, and people working in search engine optimization.

Now, an explosive leak that purports to show thousands of pages of internal documents appears to offer an unprecedented look under the hood of how Search works — and suggests that Google hasn’t been entirely truthful about it for years.

“While I don’t necessarily fault Google’s public representatives for protecting their proprietary information, I do take issue with their efforts to actively discredit people in the marketing, tech, and journalism worlds who have presented reproducible discoveries.”

Fishkin told The Verge in an email that the company has not disputed the veracity of the leak, but that an employee asked him to change some language in the post regarding how an event was characterized.

The pervasive, often annoying tactics have led to a general narrative that Google Search results are getting worse, crowded with junk that website operators feel required to produce to have their sites seen.

The US government’s antitrust case against Google — which revolves around Search — has also led to internal documentation becoming public, offering further insights into how the company’s main product works.

The original article contains 906 words, the summary contains 200 words. Saved 78%. I’m a bot and I’m open source!

It’s honestly quite strange that this sort of black box system is allowed to exist. How are governments around the world OK with a vast majority of the internet being filtered through a private company’s lens without any sort of insight into how it works? That sounds skeevy as shit.

Better than those governments having control. The ideal scenario is everything being decentralized.

Why is that better? It may not be ideal but governments have at least some accountability.

Because that paves a very easy path to corruption. No freaking way do I wanna live in a country where the government has absolute control over all information spread.

Don’t get me wrong, fuck Google, but government control of the Internet just sounds worse.

What makes governments any more susceptible to corruption than a private organization?

I’m not actually talking about governments having absolute control. That’s a pretty extreme scenario to jump to from the question of whether it’s better for a private company or a government to control search.

Right now we think Google is misusing that data. We can’t even get information on it without a leak. The government has a flawed FOIA system, but Google has nothing of the sort. The only way we’re protected from corruption at Google (and, historically speaking, several other large private organizations) is when the government steps in and stops them.

Governments often handle corruption poorly, but I can rattle off many cases where governments managed to reduce corruption on their own (i.e. without requiring a revolution). In many cases the source of that corruption was large private organizations.

You make some good points. But consider this: this data was publicly leaked by hackers. These hackers, if we go by precedent, will probably get away scot-free. Sure, it was very difficult to find this data, but not impossible. On the other hand, a government faced with a breach like this would probably find the hackers and detain them as threats to national security, as we’ve seen with Edward Snowden.

Though our system isn’t perfect, I think that having a corrupt Google is better than a corrupt government in this case. As you said, Google can be corrupt, but the government can step in and take over, whereas if a government decides that its access to citizens’ data is important enough, it can continue with corruption with less resistance. I mean, who guards the guards, right?

No it’s not better.

I want a federated social bookmarking site. Not for news or discussion of recent stuff, but to keep some good sites in your account and to share with others.

Searching those and getting results with attached upvotes/downvotes would be ideal

I was going to say that I’ve been dreaming of such a platform, one that could be used for more than just links: as a decentralized Usenet and, more importantly, as a rating system potentially more resilient to abuse (by bots or by people whose votes you don’t care about). Then I noticed that you wrote “federated”.

So you want Reddit? We have a federated clone of that. It’s Lemmy.

Does Google still have a search algorithm? I thought they now just feed everything into a huge LLM and let it regurgitate statistically plausible answers.

Honestly I hope this bites them hard. They’ve done way way worse to small businesses and competition for decades now.

I guess we are going to be in for more SEO spam than usual if this document is accurate. But I think it’s good that we are finally going to get a better understanding of how Google manipulates people with the algorithm.

So a win-lose situation.

Heh. Now I can look forward to a new browser plugin that automatically jumps to the first page of Google results that contains any entry that isn’t a Viagra ad. Huge time saver!

More SEO spam? What isn’t already?

Yeah, what isn’t SEO spam—Search Engine Optimization spam, SEO marketing, keyword stuffing, Google keyword stuffing, backlink building, best backlinks, best backlinks for backlink building

Who wants to take bets that Search itself ends up in The Graveyard soon, leaving nothing but the new AI abomination in place?

More likely they will just slowly rebrand search to more AI type things. Then slowly retire the non-AI parts in the background.

Can’t wait for selfhosted web search to become better.

You mean hosting your own crawler/indexer? That doesn’t really sound like a thing you could do cost-effectively.

You could use Common Crawl; it’s run by a nonprofit.

No problem we crowdsource the crawling torrent style.

We outsourced that to Google for reasonable performance reasons. But they shit the bed, so now there’s no choice but to do it ourselves.

Federated bookmarks?

Federated directories. We’re going back to Yahoo like it’s 1995

Webrings!!!

Uh…I know we’re all just having fun here, but I need to be part of a webring again. If anyone is more than joking, I kinda need to know about it. Thanks.

There are tons of webrings still going these days!

Seriously? Cool. I’m going to go do some research then. And maybe entirely change the purpose of my blog, just to fit into one…

<under_construction.gif>

I loved Geocities!

I’m so ready for something like this. I’ve cleaned up my bookmarks and been waiting for alternatives to search engines.

Right!

Before his company was able to block more of Microsoft’s own tracking scripts, DuckDuckGo CEO and founder Gabriel Weinberg explained in a Reddit reply why firms like his weren’t going the full DIY route:

“… [W]e source most of our traditional links and images privately from Bing … Really only two companies (Google and Microsoft) have a high-quality global web link index (because I believe it costs upwards of a billion dollars a year to do), and so literally every other global search engine needs to bootstrap with one or both of them to provide a mainstream search product. The same is true for maps btw – only the biggest companies can similarly afford to put satellites up and send ground cars to take streetview pictures of every neighborhood.”

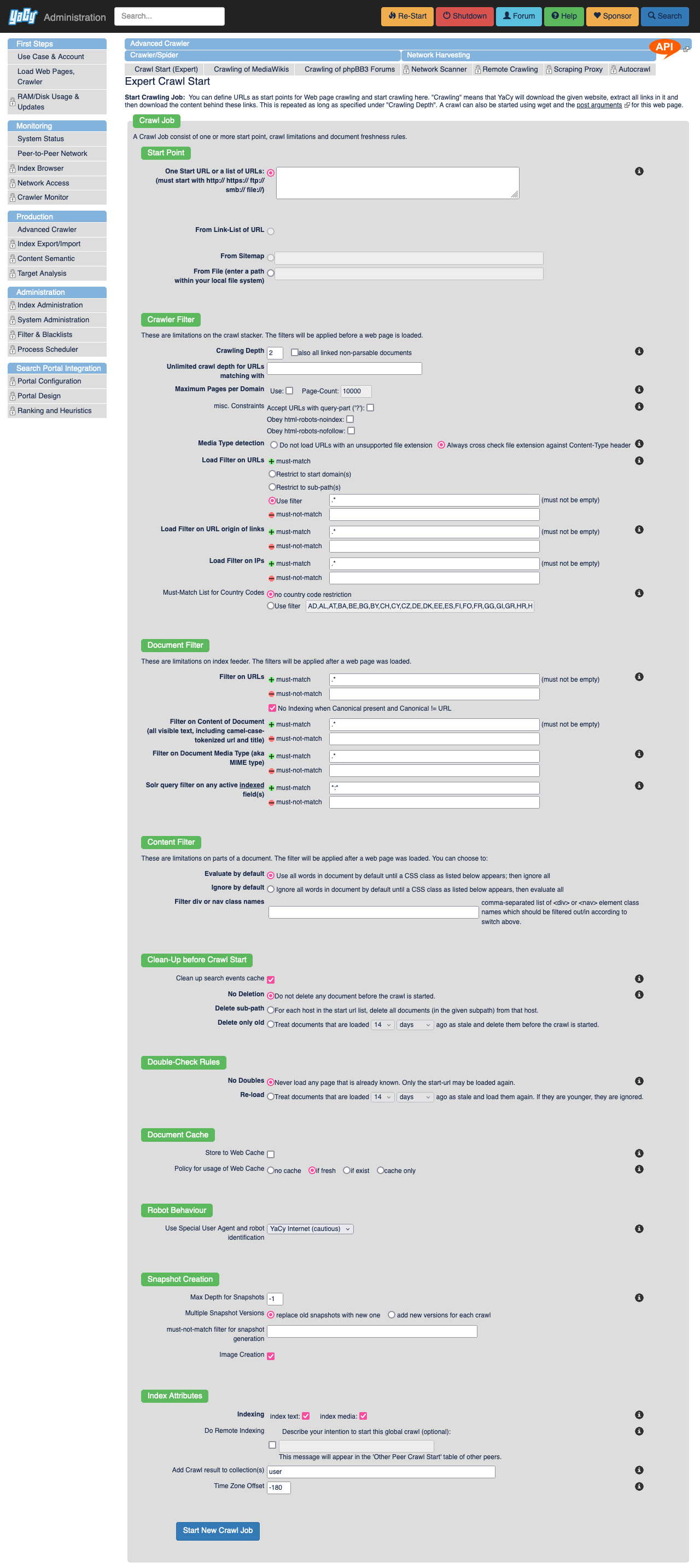

Look up the YaCy repo on GitHub.

Surprisingly, it’s very doable: it requires basic technical knowledge and relatively minimal computing resources (it runs in the background on your computer).

I have a Tampermonkey script that sends YaCy to crawl any website I visit, and it’s keeping up a relatively good index of the visited websites for personal use. Combine YaCy with ~300 GB of Kiwix databases, add SearXNG as a frontend, and you have a pretty strong self-hosted search engine.

Of course, you need to supplement your searches with other search engines, as YaCy does not crawl the whole web, just what you tell it to.

I encourage anyone who’s even slightly interested in this stuff to try YaCy. It’s an ancient piece of software, but it still works very well and is not an abandoned project yet!

–

I personally use YaCy mostly in private mode, but it does have the distributed network as well.

Yeah, I guess the P2P component sort of solves part of the issue I was imagining by distributing indexes and crawling. I was thinking that people were trying to run all of Google on a raspberry pi at home.
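The idea of spreading crawling across many machines can be illustrated with simple hash partitioning: each peer owns a slice of hostnames, so no two peers crawl the same site. This is a generic sketch, not how YaCy itself distributes its index; the peer names and URLs are hypothetical.

```python
import hashlib
from urllib.parse import urlsplit


def assign_peer(url: str, peers: list[str]) -> str:
    """Pick the peer responsible for a URL by hashing its hostname."""
    host = urlsplit(url).hostname or ""
    digest = hashlib.sha256(host.encode("utf-8")).digest()
    # Treat the first 8 bytes of the hash as an integer and map it onto the peer list.
    index = int.from_bytes(digest[:8], "big") % len(peers)
    return peers[index]


def partition(urls: list[str], peers: list[str]) -> dict[str, list[str]]:
    """Group URLs into one work queue per peer."""
    queues: dict[str, list[str]] = {peer: [] for peer in peers}
    for url in urls:
        queues[assign_peer(url, peers)].append(url)
    return queues


if __name__ == "__main__":
    # Hypothetical peers and URLs, for illustration only.
    peers = ["peer-a", "peer-b", "peer-c"]
    urls = [
        "https://example.com/",
        "https://example.com/about",
        "https://example.org/news",
    ]
    for peer, queue in partition(urls, peers).items():
        print(peer, queue)
```

Hashing the hostname rather than the full URL keeps every page of a site on the same peer, which makes per-site politeness and deduplication easier; the trade-off is that one huge site lands entirely on one machine.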

This is interesting, have you had it index reddit? I’m just wondering how much storage space the database takes up.

Hi!

Great question! I don’t crawl Reddit, but this applies to other large sites as well. Reddit has, at this very moment, banned the IP range where I host my YaCy (Hetzner). I just checked my index, and I do have 257k pages indexed from Reddit through a Teddit instance I used to run; this is from before Reddit’s API enshittification. Going to delete those right now.

Crawling works by defining a crawl depth, which limits how much content is crawled from the site:

- 0 crawling depth = only the page you send Yacy to, nothing more.

- 1 crawling depth = all the links on the page you send Yacy to

- 2 crawling depth = all links on the page you send Yacy to, and all links on the pages crawled…

- 3 …

- n …

… etc.
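The depth rule above is essentially a breadth-first search that stops following links after a fixed number of hops. A minimal sketch, assuming an in-memory link graph (the `LINKS` dict and page names are hypothetical) in place of real HTTP fetches; this is not YaCy’s actual code.

```python
from collections import deque


def crawl(start: str, links: dict[str, list[str]], max_depth: int) -> list[str]:
    """Breadth-first crawl from `start`, following links at most `max_depth` hops."""
    seen = {start}
    order = [start]
    queue = deque([(start, 0)])  # (page, depth at which it was reached)
    while queue:
        page, depth = queue.popleft()
        if depth == max_depth:
            continue  # reached the depth limit: record the page but don't follow its links
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                order.append(target)
                queue.append((target, depth + 1))
    return order


# Hypothetical link graph standing in for real pages.
LINKS = {
    "home": ["news", "about"],
    "news": ["story-1", "story-2"],
    "about": ["home"],
    "story-1": ["story-1-comments"],
}

if __name__ == "__main__":
    for depth in range(4):
        print(depth, crawl("home", LINKS, depth))
```

With this graph, depth 0 returns only `home`, depth 1 adds `news` and `about`, depth 2 adds the two stories, and depth 3 adds `story-1-comments`. Each extra level can multiply the number of pages fetched, which is why bumping the depth from 1 to 2 can grow an index quickly.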

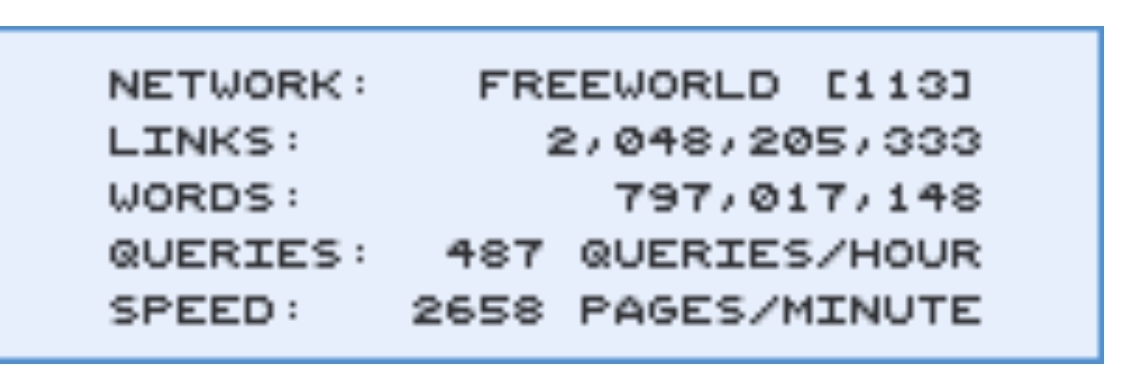

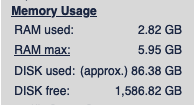

I have my Tampermonkey scripts set to a crawl depth of only 1 at the moment (actually, I just set them to 2; kinda curious how much more I will be crawling). At the beginning, I also manually crawled some local news sites out of curiosity. My database is currently relatively small, around 86.38 gigabytes according to YaCy, storing approximately 2.6 million documents in YaCy’s Solr.
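As a rough answer to the storage question above, those two figures give an average size per indexed document. A back-of-the-envelope sketch, assuming decimal gigabytes (YaCy may actually report binary GiB) and assuming that pages from other sites average the same size, which they may well not:

```python
GB = 10**9  # assumption: decimal gigabytes; YaCy might report binary GiB instead


def bytes_per_document(total_gb: float, documents: int) -> float:
    """Average on-disk size of one indexed document, in bytes."""
    return total_gb * GB / documents


def estimate_index_gb(documents: int, avg_bytes: float) -> float:
    """Rough index size for `documents` pages of the same average size."""
    return documents * avg_bytes / GB


if __name__ == "__main__":
    avg = bytes_per_document(86.38, 2_600_000)
    print(f"average: {avg / 1000:.1f} kB per document")
    # Hypothetical: the 257k Reddit pages, if they matched the overall average.
    print(f"257k pages: ~{estimate_index_gb(257_000, avg):.1f} GB")
```

That works out to roughly 33 kB per document, so 257k pages of similar size would be on the order of 8.5 GB.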

–

YaCy has tons of options for crawling, so you can customize how much it crawls and even filter out overly large sites by setting a maximum number of documents when you send YaCy there.
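A per-site document cap can be layered onto the same kind of depth-limited crawl. A sketch under stated assumptions: the link graph is a hypothetical in-memory dict, “site” means hostname, and the cap counts accepted pages per host; YaCy’s own option may work differently.

```python
from collections import Counter, deque
from urllib.parse import urlsplit


def crawl_with_cap(
    start: str, links: dict[str, list[str]], max_depth: int, max_docs_per_host: int
) -> list[str]:
    """Depth-limited BFS that stops accepting pages from a host once it hits its cap."""
    per_host = Counter()  # pages accepted so far, per hostname
    seen: set[str] = set()
    accepted: list[str] = []
    queue = deque([(start, 0)])
    while queue:
        url, depth = queue.popleft()
        if url in seen:
            continue
        seen.add(url)
        host = urlsplit(url).hostname
        if per_host[host] >= max_docs_per_host:
            continue  # this site already filled its quota; skip the page and its links
        per_host[host] += 1
        accepted.append(url)
        if depth < max_depth:
            for target in links.get(url, []):
                queue.append((target, depth + 1))
    return accepted


# Hypothetical graph: one "big" site linking to many of its own pages and one small site.
EXAMPLE_LINKS = {
    "https://big.example/": [
        "https://big.example/a",
        "https://big.example/b",
        "https://big.example/c",
        "https://small.example/",
    ],
    "https://small.example/": ["https://small.example/x"],
}

if __name__ == "__main__":
    print(crawl_with_cap("https://big.example/", EXAMPLE_LINKS, max_depth=2, max_docs_per_host=2))
```

With a cap of 2, the big site contributes only its first two pages while the small site is still crawled in full, which is the point of the option: one sprawling site can’t crowd everything else out of the index.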

(Screenshot: YaCy’s interface for starting a crawl.)

–

The Tampermonkey script I’ve been talking about in these posts is a very simple one: https://github.com/JeremyRand/YaCyIndexerGreasemonkey

Hit me up if you guys have more questions! I’m by no means an expert on Yacy, but I will do my best to answer.

rubs hands together

Someone got a torrent for us?

Some information in the documents appears to be in conflict with public statements by Google representatives

I would have never guessed that.

At this point, if you are not assuming that a corporation is pretty much lying for convenience, you ain’t operating in reality, haha.

Yep, but I’ll add my two cents: half is lying and half is guesswork and ignorance, because nobody really knows how big, old systems really work.

No one reaches a position like Google’s without knowing every nook and cranny of their systems down to the atom.

Eeeeh, you overestimate people’s capacity to learn what someone who’s being fired knows.

Where can one get a hold of these documents?

This appears to be the original blog post, but I’m not finding a way to download this. https://sparktoro.com/blog/an-anonymous-source-shared-thousands-of-leaked-google-search-api-documents-with-me-everyone-in-seo-should-see-them/

Is this not leaked past this one person?

Edit 2: No, these appear to be normal public docs.

Edit: seems these are the docs? https://hexdocs.pm/google_api_content_warehouse/0.4.0/GoogleApi.ContentWarehouse.V1.Model.QualityNavboostCrapsCrapsData.html

Grab it while it’s still up: https://github.com/yoshi-code-bot/elixir-google-api/commit/d7a637f4391b2174a2cf43ee11e6577a204a161e

Wait, why is that commit still up if this is a data leak?

It’s not a data leak; it’s a leak of internal documentation in a Google API client, which supposedly contains “leaks” of how the Google algorithm might work, e.g. the existence of a domain authority attribute that Google denied for years. I haven’t actually dug in to see if it’s really a leak or was overblown, though.

Internal documentation leaking is still a data leak, it’s just a subset of a data leak.

If it was sensitive information, that commit would have been purged by now. The original PR (on the Google Clients repo) has no mention of problems, and there are no issues or discussions around rewriting the git history on that item.

This makes me think this isn’t actually a problem.

My org is less practiced on operational security than Google and we would purge that information within minutes of any of us hearing about it. And this has been on blog posts for a while now.

Rand Fishkin, who worked in SEO for more than a decade, says a source shared 2,500 pages of documents with him with the hopes that reporting on the leak would counter the “lies” that Google employees had shared about how the search algorithm works.

And am I supposed to care that the poor SEO assholes that need to get their ads more visibility weren’t being given all the instructions on how to do that by the search engine?

No. You’re supposed to care that a company is pointlessly* lying, thus it’s extremely likely to deceive, mislead and lie when it gets some benefit out of it.

In other words: SEO arseholes can ligma, Google is lying to you and me too.

*I say “pointlessly” because not disclosing info would achieve practically the same result as lying.

Tell me you don’t know shit about SEO without telling me you don’t know shit about SEO.

Just because there are people who do bad things doesn’t mean the industry is bad or has bad intentions. SEO isn’t ads. Advertorials can be a tactic of SEO, but they’re not SEO as a whole. Same with clickbait: it’s used because it works, and I guarantee you also fall for it constantly.

SEO is about understanding what someone needs and creating an experience to ensure that someone finds the answer to what they need through content and/or a product to solve their needs.

This can be achieved through copywriting, researching search trends and queries, technical analysis of websites and how they render, providing guidance on helpful assets (photos, PDFs, videos, forms, copy, etc.), PR outreach because links are how people move around online or discover things, social planning because social media are a form of search engine, and more.

And finally, SEOs are not responsible for how Google treats shit. Google is responsible for that. Google is the one that tweaks the algorithm and doesn’t catch spammy shit. In fact, many SEOs catch it and report it to Google’s reps, but the reps are the ones who can ensure the right team(s) fix the issue.

Wait, what is “social planning”, and how is it different from conventional marketing on social media? That seems pretty far removed from search engines.

need to get their ads more visibility

I occasionally encounter the desire for a search engine to surface non-advertisement content :)

Now if they lied to advertisers and told small bloggers, reputable news agencies, fediverse admins, etc. the insider secrets… now we’re talkin’!

Historically, Google had a give-and-take with SEO. You can’t make SEO companies go away, but you can curb the worst behavior. Google used to punish bad behavior with a poor listing, and you had to do some work to get it back into compliance and tell Google it’s fixed up.

It wasn’t ideal, but it functioned well enough.

The drive to make search more profitable over the past few years seems to have meant dropping this. SEO companies can get away with whatever. If they now have the whole manual, game over. Google of a decade ago might have done something about it. Google of today won’t bother.

Now, where to download these, for science.

Google it.

Use Bing 😅😅